-

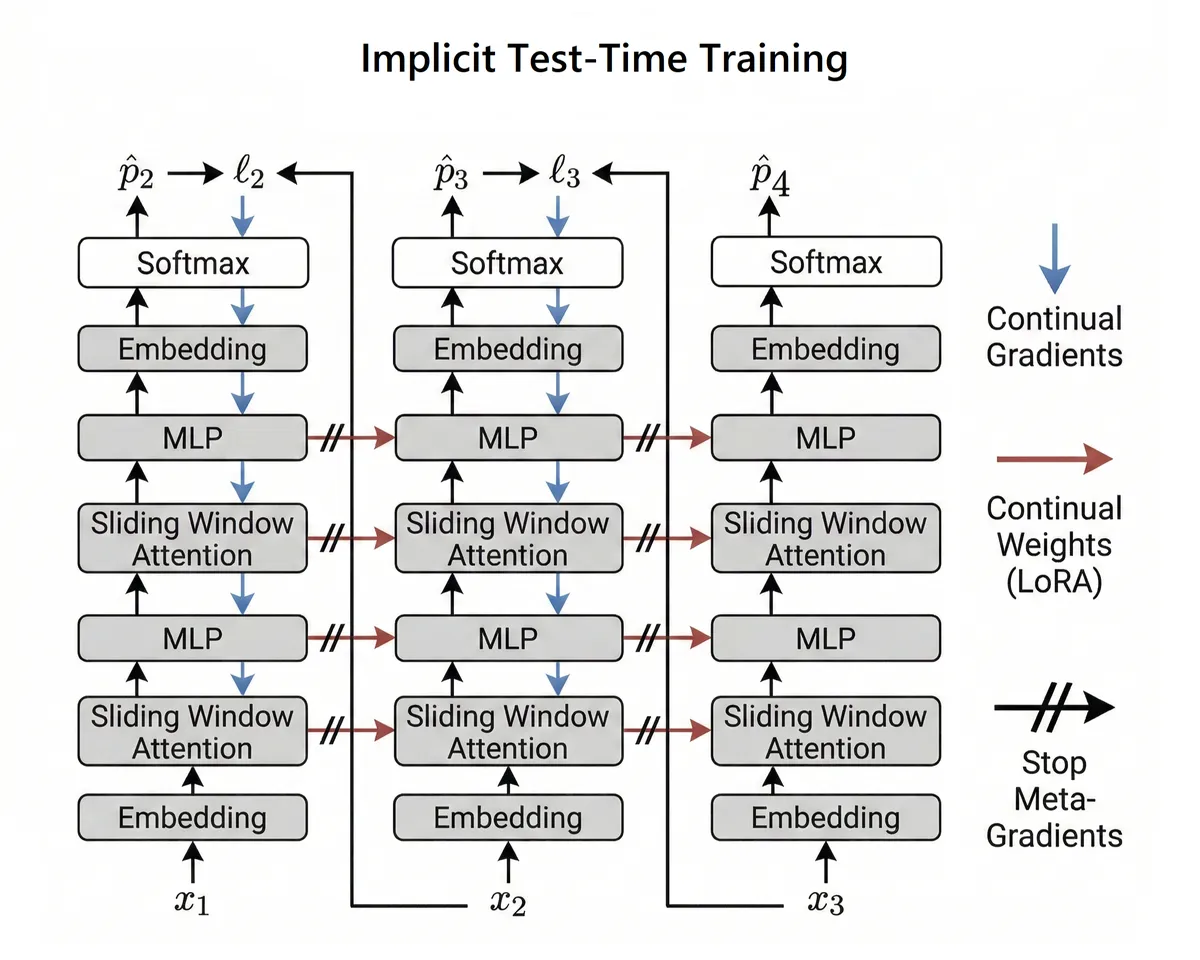

iTTT (WIP)

Implicit Test-Time Training enables sequence modelling with true O(1) training memory (and beats dense attention at long context?). Currently a work in progress.

-

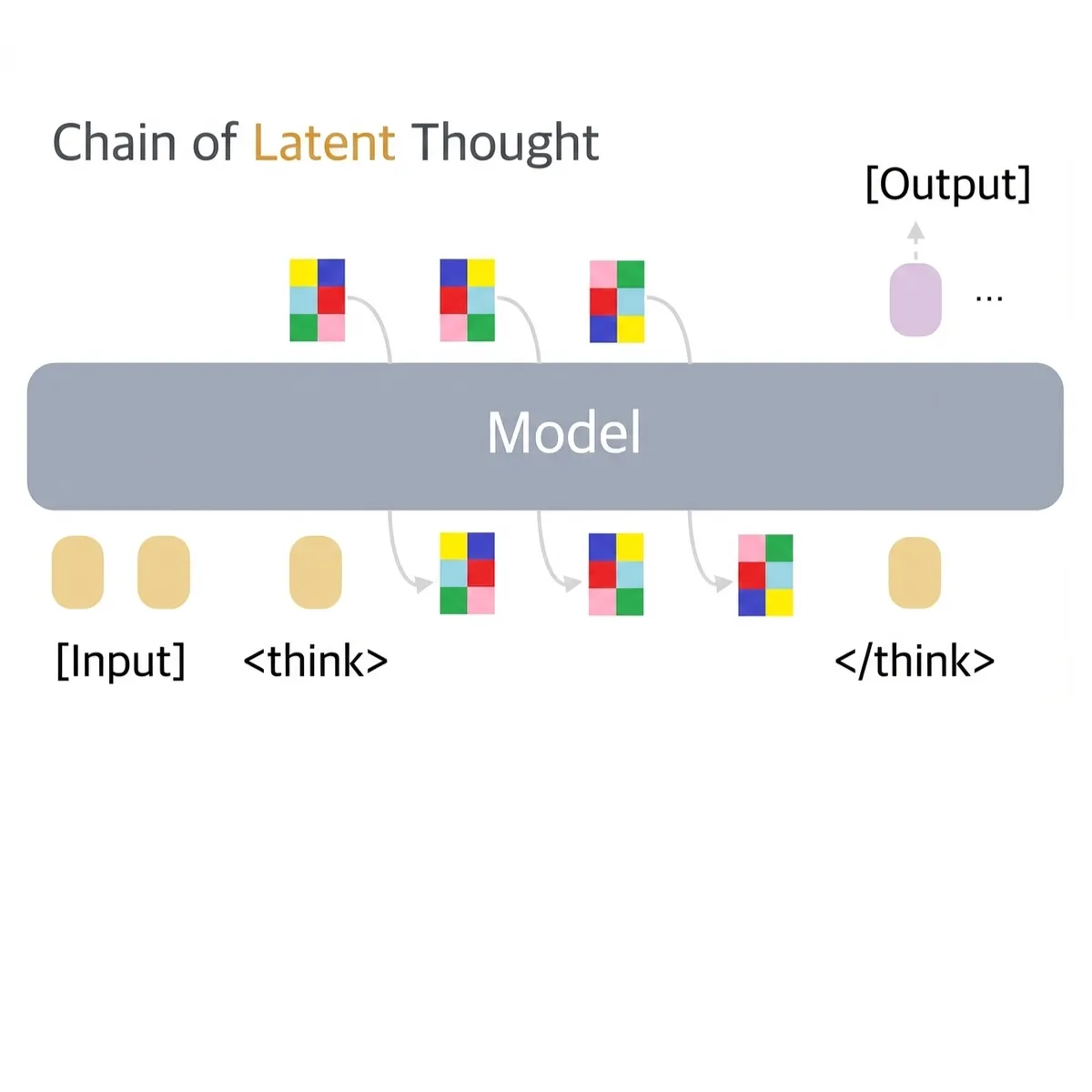

ZEBRA (WIP)

Train in parallel and test serially for scalable latent reasoning. Currently a work in progress.

-

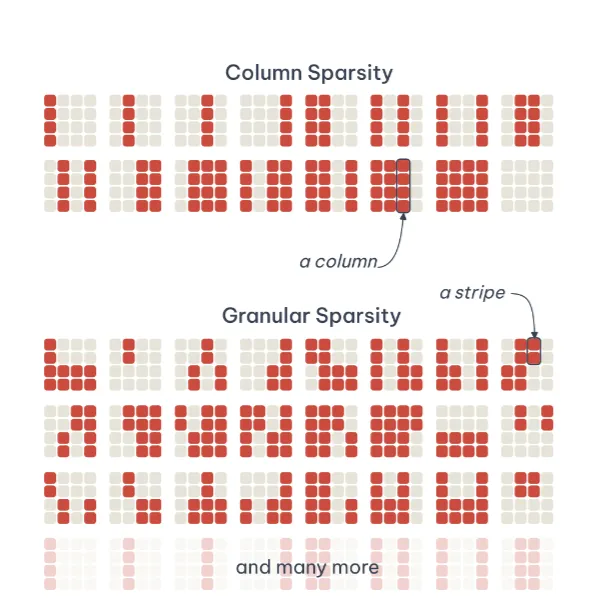

CWIC

Compute Where It Counts. A new state-of-the-art method for creating sparse transformers that automatically decide when to use more or less compute.

-

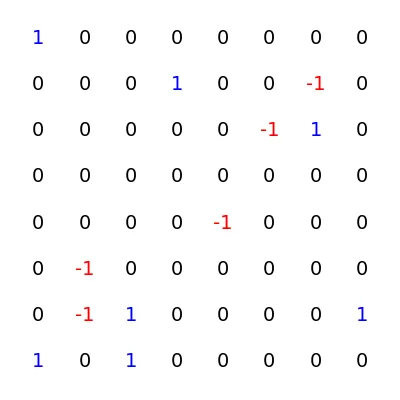

pBit

Enabling both sparsity and low-bit quantization in neural networks using stochastic weights and the local reparameterization trick.

-

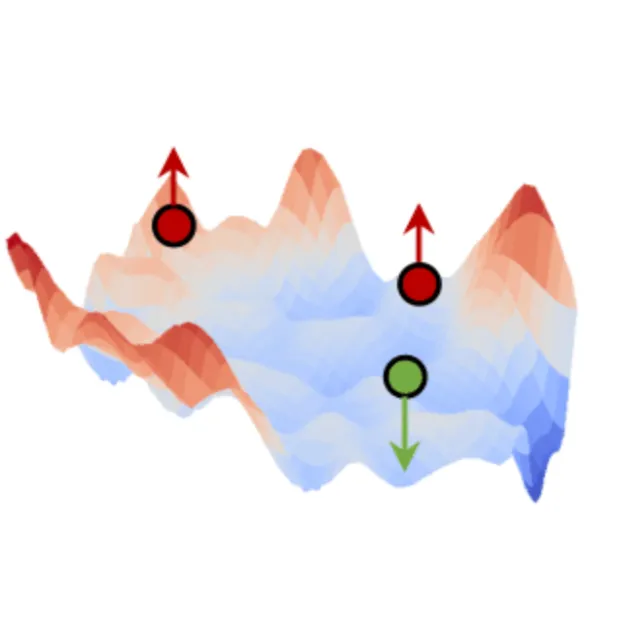

MonArc

Token-level residual energy-based models enable parallelizable pretraining with 2x better data efficiency than standard autoregressive models.

-

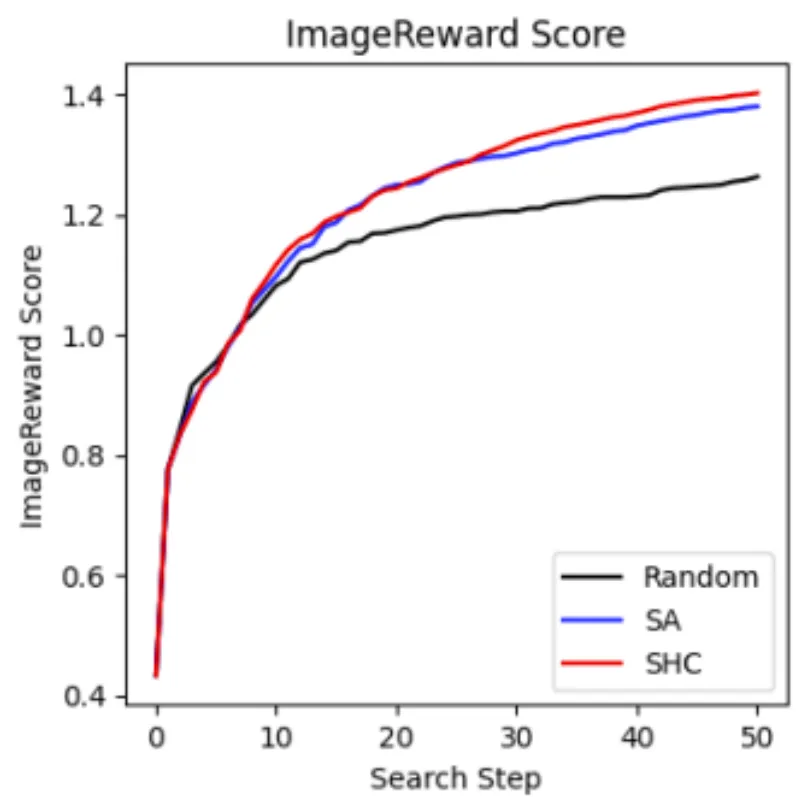

NoiseSearch

Metaheuristic search over diffusion model noise achieves better performance than best-of-N sampling (early work on test-time scaling).
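To make the NoiseSearch comparison concrete, here is a minimal toy sketch of the two strategies under an equal evaluation budget. It is not the project's implementation: `generate` is a hypothetical stand-in for a deterministic diffusion sampler, `score` is a hypothetical reward, and plain hill climbing stands in for whichever metaheuristic the project actually uses.

```python
import math
import random


def generate(noise):
    # Hypothetical stand-in for a deterministic sampler: maps a noise
    # vector to a "sample". A real diffusion model would go here.
    return [math.tanh(z) for z in noise]


def score(sample):
    # Hypothetical reward: higher is better, with a maximum of 0.0
    # when every coordinate equals 0.5.
    return -sum((s - 0.5) ** 2 for s in sample)


def best_of_n(dim, budget, rng):
    # Baseline: draw `budget` independent noise vectors, keep the best one.
    best_noise, best_score = None, float("-inf")
    for _ in range(budget):
        noise = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        s = score(generate(noise))
        if s > best_score:
            best_noise, best_score = noise, s
    return best_noise, best_score


def hill_climb(dim, budget, rng, step=0.3):
    # Simple metaheuristic (assumed here for illustration): start from one
    # noise draw, then spend the remaining budget on local perturbations,
    # keeping a candidate only when it improves the score.
    # Note: perturbed noise can drift away from the Gaussian prior.
    noise = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    best = score(generate(noise))
    for _ in range(budget - 1):
        cand = [z + step * rng.gauss(0.0, 1.0) for z in noise]
        s = score(generate(cand))
        if s > best:
            noise, best = cand, s
    return noise, best


if __name__ == "__main__":
    dim, budget = 8, 64
    _, bon = best_of_n(dim, budget, random.Random(0))
    _, hc = hill_climb(dim, budget, random.Random(0))
    print("best-of-N score:", bon)
    print("hill-climb score:", hc)
```

Both functions call `score` exactly `budget` times, so the comparison is budget-matched. Which strategy wins on this toy objective depends on the seed, step size, and reward, and says nothing about results on a real diffusion model.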